Tag: opencv

image histogram

python png

OpenCV (cv2) can be used to extract data from images and perform operations on them. Some examples follow:

Image properties

We can extract the width, height and color depth using the code below:

import cv2 |
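As a minimal sketch of reading these properties: an image loaded by cv2.imread is a NumPy array, so width, height and channel count come from its shape. The array below is a synthetic stand-in for a loaded image, and the file name in the comment is hypothetical.

```python
import numpy as np

# Synthetic stand-in for: image = cv2.imread('image.png')  (hypothetical file name).
# For a color image, cv2.imread returns an array shaped (height, width, channels).
image = np.zeros((120, 160, 3), dtype=np.uint8)

height, width, channels = image.shape
bits_per_channel = image.dtype.itemsize * 8  # uint8 means 8 bits per channel

print("width:", width)
print("height:", height)
print("channels:", channels)
print("bits per channel:", bits_per_channel)
```

Note that the shape lists the height first, then the width.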

Access pixel data

We can access the pixel data of an image directly through its matrix, for example:

import cv2 |
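A small sketch of direct pixel access, assuming the image matrix is called m as elsewhere in this post. The array is a synthetic stand-in for a loaded image. The matrix is indexed as m[py][px] (row first), and OpenCV stores the channels in blue, green, red order.

```python
import numpy as np

# Synthetic stand-in for: m = cv2.imread('image.png')  (hypothetical file name).
m = np.zeros((4, 6, 3), dtype=np.uint8)
m[2, 3] = (255, 128, 0)  # set one pixel; values are in B, G, R order

px, py = 3, 2  # x is the column, y is the row
blue, green, red = m[py][px]
print(blue, green, red)
```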

To iterate over all pixels in the image you can use:

import cv2 |
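One way to sketch the iteration, again on a synthetic stand-in for a loaded image: two nested loops over rows and columns. The list called visited is only there for illustration.

```python
import numpy as np

# Synthetic 2x3 image standing in for cv2.imread(...); values 0..17.
m = np.arange(2 * 3 * 3, dtype=np.uint8).reshape(2, 3, 3)

height, width, channels = m.shape
visited = []
for py in range(height):        # rows
    for px in range(width):     # columns
        b, g, r = m[py][px]     # channels are in B, G, R order
        visited.append((px, py, int(b), int(g), int(r)))

print(len(visited), "pixels visited")
```

Looping in pure Python is slow on large images; for whole-image operations NumPy slicing is usually much faster.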

Image manipulation

You can modify the pixels and pixel channels directly. Note that OpenCV stores the channels in blue, green, red (BGR) order rather than r,g,b. In the example below we remove one color channel:

import cv2 |

To change the entire image, you’ll have to change all channels: m[py][px][0], m[py][px][1], m[py][px][2].
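A hedged sketch of removing a channel, here the red one (index 2 in OpenCV's BGR order). It shows the per-pixel form m[py][px][2] and an equivalent NumPy slice. The image is a synthetic stand-in for one loaded with cv2.imread.

```python
import numpy as np

# Synthetic stand-in for a loaded image: every channel set to 200.
m = np.full((4, 4, 3), 200, dtype=np.uint8)

# Per-pixel version: zero the red channel of each pixel.
looped = m.copy()
height, width, _ = looped.shape
for py in range(height):
    for px in range(width):
        looped[py][px][2] = 0

# Equivalent slice version: zero the red channel of the whole image at once.
no_red = m.copy()
no_red[:, :, 2] = 0
```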

Save image

You can save a modified image to the disk using:

cv2.imwrite('filename.png', m)

Download Computer Vision Examples + Course

template matching python from scratch

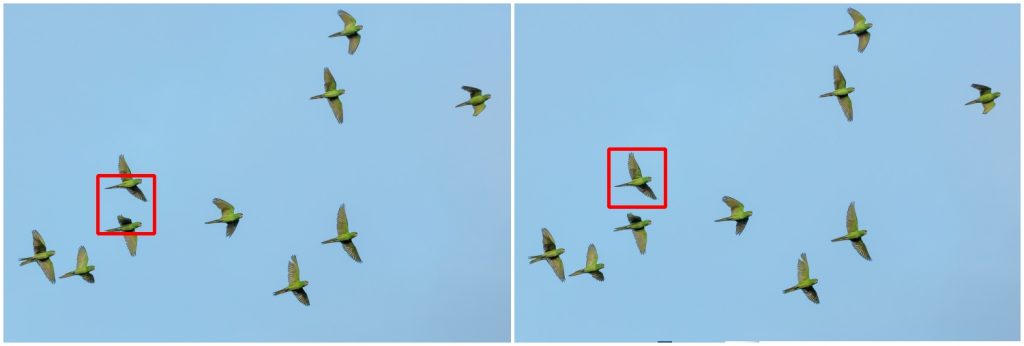

Template matching is a technique for finding areas of an image that are similar to a patch (template). It is used in areas such as robotics and manufacturing.

Related course:

Master Computer Vision with OpenCV

Introduction

A patch is a small image with certain features. The goal of template matching is to find the patch/template in an image.

Template matching with OpenCV and Python. Template (left), result image (right)

To find a match we need two images:

- Source image (S): the image in which to search for matches

- Template image (T): the patch to search for

The template image T is slid over the source image S one position at a time, and at each position the program computes a similarity score using a statistical measure.

Template matching example

Let's have a look at the code:

import numpy as np |

Explanation

First we load both the source image and the template image with imread(). We resize them and convert them to grayscale for faster detection:

We use the cv2.matchTemplate(image,template,method) method to find the most similar area in the image. The third argument is the statistical method.

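There are six matching methods: cv2.TM_SQDIFF, cv2.TM_SQDIFF_NORMED, cv2.TM_CCORR, cv2.TM_CCORR_NORMED, cv2.TM_CCOEFF and cv2.TM_CCOEFF_NORMED (named CV_TM_* in the older C API). They are simply different statistical comparison measures. For the two SQDIFF methods the best match is the minimum of the result; for the others it is the maximum.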

Finally, we compute the coordinates of the matching rectangle and display the image.

Limitations

Template matching is neither scale invariant nor rotation invariant. It is a very basic and straightforward method in which we find the most strongly correlated area. Whether it is suitable therefore depends on the kind of application you want to build. For input in which the object's scale and rotation do not change, this method works well.

face detection python

In this tutorial you will learn how to apply face detection with Python. As input video we will use a Google Hangouts video. There are many Google Hangouts videos on the web, and in them the face is usually large enough for the software to detect.

Detection of faces is achieved using the OpenCV (Open Source Computer Vision) library. A common face detection method uses cascade classifiers, a technique known to work well for faces. You need to have the cascade files (included in OpenCV) in the same directory as your program.

Video with Python OpenCV

To analyse the input video we extract each frame. Each frame is shown for a brief period of time. Start with this basic program:

#! /usr/bin/python |

Upon execution you will see the video played without sound (OpenCV does not support audio). Inside the while loop, the variable frame holds the current video frame.

Face detection with OpenCV

We will draw a rectangle on top of the face. To avoid flickering, we show the rectangle at its last known position whenever the face is not detected.

#! /usr/bin/python |

In this program we simply assume there is one face in the video frame. We reduce the size of the frame to speed up processing. This is fine in most cases because detection still works at lower resolutions. If you want to run face detection in “real time”, keeping the computation per frame short is essential. An alternative to this implementation is to process first and display later.

A limitation of this technique is that it does not always detect faces: faces that are very small or occluded may be missed. It may also produce false positives, such as a bag detected as a face. The technique works quite well on certain types of input video.

Car tracking with cascades

In this tutorial we will look at vehicle tracking using Haar features. We have a Haar cascade file trained on cars.

The program will detect regions of interest, classify them as cars and show rectangles around them.

Detecting with cascades

Let's start with the basic cascade detection program:

#! /usr/bin/python |

This will detect cars in the frame, but it will also pick up noise, and the rectangles will sometimes jitter. To avoid this, we have to improve our car tracking algorithm. We use a simple solution.

Car tracking algorithm

For every frame:

- Detect potential regions of interest

- Filter detected regions based on vertical and horizontal similarity

- If it is a new region, add it to the collection

- Clear the collection every 30 frames

Removing false positives

The mean squared error (MSE) function is used to remove false positives. We compare the vertical and horizontal sides of each detected region. If the difference is too large or too small, the region cannot be a car.
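One possible reading of this filter, sketched under assumptions: compare the left half of a region with the mirrored right half, and the top half with the bottom half, using the mean squared error. The function names and the threshold values are hypothetical and would need tuning on real footage.

```python
import numpy as np


def mse(a, b):
    """Mean squared error between two equally sized images."""
    diff = a.astype(np.float64) - b.astype(np.float64)
    return float(np.mean(diff ** 2))


def left_right_mse(roi):
    """Compare the left half with the mirrored right half."""
    width = roi.shape[1]
    half = width // 2
    left = roi[:, :half]
    right_mirrored = roi[:, width - half:][:, ::-1]
    return mse(left, right_mirrored)


def top_bottom_mse(roi):
    """Compare the top half with the bottom half."""
    height = roi.shape[0]
    half = height // 2
    return mse(roi[:half], roi[height - half:])


def looks_like_car(roi, low=50.0, high=5000.0):
    """Keep a region only if both differences fall inside (low, high).

    The thresholds are placeholder values for this sketch.
    """
    horizontal = left_right_mse(roi)
    vertical = top_bottom_mse(roi)
    return low < horizontal < high and low < vertical < high
```

A perfectly symmetric region gives a difference of zero, which this sketch rejects as "too small".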

ROI detection

A car may not be detected in every frame. If a new car is detected, it is added to the collection.

We keep this collection for 30 frames, then clear it.

#!/usr/bin/python |
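The full program is cut off above, so here is a sketch of just the collection logic described in this section, not the original code. A region counts as new when it is not close to any region already stored. The function names and the 40-pixel tolerance are assumptions; the 30-frame lifetime comes from the text.

```python
def is_new_region(rect, collection, tolerance=40):
    """A region is new if its corner is not near any stored region's corner."""
    x, y = rect[0], rect[1]
    return all(
        abs(x - cx) >= tolerance or abs(y - cy) >= tolerance
        for (cx, cy, _, _) in collection
    )


def update_collection(collection, detections, frame_index, lifetime=30):
    """Clear the collection every `lifetime` frames, then add new regions."""
    if frame_index % lifetime == 0:
        collection.clear()
    for rect in detections:
        if is_new_region(rect, collection):
            collection.append(rect)
    return collection


# Small walk-through with made-up detections as (x, y, w, h) tuples.
regions = []
update_collection(regions, [(10, 10, 50, 50)], frame_index=1)
update_collection(regions, [(15, 12, 50, 50)], frame_index=2)    # same car again
update_collection(regions, [(200, 100, 50, 50)], frame_index=3)  # a second car
print(regions)
```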

Final notes

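The cascades are not rotation invariant, and the classifier itself works on a fixed-size window; detectMultiScale compensates by scanning the frame at several positions and scales. In addition, detecting vehicles with Haar cascades may work reasonably well, but other algorithms (for example, those based on salient points) may give better results.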
